Sparse categorical cross entropy

12/2/2023

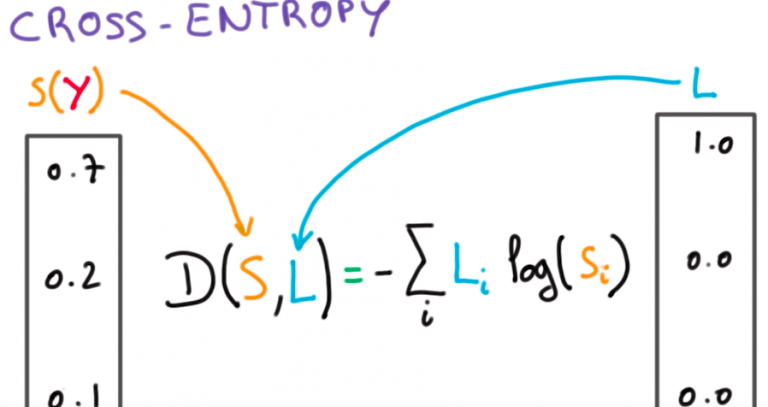

Some of the following nuggets have been collected from the experience of data scientists who ran into errors while using sparse categorical cross-entropy.

A lot of people wonder why, in some cases, tf._crossentropy() does not yield the expected output. The reason is that tf._crossentropy() applies a small offset (1e-7) to y_pred when it is equal to one or zero, so the result can differ slightly from a hand calculation. Similarly, the SparseCategoricalCrossentropy() loss function applies a small offset (1e-7) to the predicted probabilities to make sure that the loss values are always finite.

Sparse categorical cross-entropy is more efficient when you have a lot of categories or labels, which would consume a huge amount of RAM if they were one-hot encoded. It has two arguments worth knowing: from_logits and reduction.

Reduction can be set to 'auto' or 'none'. It is normally set to 'auto', which returns a single value: the average over the batch of -label*log(pred), which for integer labels is simply the negative log of the probability predicted for the true class. Setting it to 'none' instead returns each of those per-sample values, with shape (batch_size). Computing a reduce_mean on that result gives the same value as reduction='auto': print(loss_from_scc_red, tf.math.reduce_mean(loss_from_scc_red)).

When you explicitly apply the softmax (or sigmoid) function at the end of the network for a classification task, use the default option of the TensorFlow loss function, from_logits=False: loss_taking_prob = tf.(from_logits=False), then loss_1 = loss_taking_prob(y_true, nn_output_after_softmax). Here TensorFlow assumes that whatever you feed to the loss function is already a probability, so it does not apply softmax again.

When you do not intend to apply softmax separately and would prefer to include it in the calculation of the loss, set from_logits=True and pass the raw network outputs. Here you are letting TensorFlow perform the softmax operation for you.
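To make the from_logits, reduction, and 1e-7 offset behaviour described above concrete, here is a minimal sketch in plain NumPy. It is not TensorFlow's implementation: the function names `softmax` and `sparse_cce`, the sample values, and the choice to treat 'auto' as a batch mean and to apply the offset by clipping are all assumptions made for illustration.

```python
import numpy as np

EPSILON = 1e-7  # the small offset mentioned in the text; applied here by clipping (assumption)


def softmax(logits):
    """Row-wise softmax, shifted by the row max for numerical stability."""
    shifted = logits - logits.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)


def sparse_cce(y_true, y_pred, from_logits=False, reduction="auto"):
    """Illustrative sparse categorical cross-entropy (hypothetical helper).

    y_true: integer class indices, shape (batch_size,)
    y_pred: logits or probabilities, shape (batch_size, num_classes)
    """
    y_true = np.asarray(y_true, dtype=np.int64)
    y_pred = np.asarray(y_pred, dtype=np.float64)
    # With from_logits=True the loss applies softmax itself.
    probs = softmax(y_pred) if from_logits else y_pred
    # Keep probabilities away from exactly 0 and 1 so the log stays finite.
    probs = np.clip(probs, EPSILON, 1.0 - EPSILON)
    # Pick the probability assigned to the true class of each sample.
    picked = probs[np.arange(len(y_true)), y_true]
    per_sample = -np.log(picked)
    if reduction == "none":
        return per_sample  # shape (batch_size,)
    return per_sample.mean()  # 'auto' is treated as the batch mean in this sketch


y_true = [1, 2]
logits = np.array([[2.0, 1.0, 0.1], [0.5, 0.5, 3.0]])

# Raw outputs with from_logits=True vs. softmax applied by hand with from_logits=False.
loss_from_logits = sparse_cce(y_true, logits, from_logits=True)
loss_from_probs = sparse_cce(y_true, softmax(logits), from_logits=False)

# reduction='none' keeps one loss value per sample.
loss_per_sample = sparse_cce(y_true, logits, from_logits=True, reduction="none")

# A predicted probability of exactly 0 for the true class still gives a finite loss.
loss_zero_prob = sparse_cce([0], [[0.0, 1.0]])

print(loss_from_logits, loss_from_probs)
print(loss_per_sample, loss_per_sample.mean())
print(loss_zero_prob)
```

In this sketch the two from_logits routes agree, the mean of the per-sample losses matches the reduced value, and the clipped prediction yields a large but finite loss rather than infinity.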
